Lenovo ThinkSystem SR680a V3 AI Server | 8x NVIDIA HGX H200 141GB | Dual Xeon Platinum 8568Y+ | 2TB DDR5

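Shop with confidence: returns are accepted.

Shipping: International shipments may be subject to customs processing and additional charges.

Delivery: International deliveries that go through customs processing may take additional time.

Returns: Returns are accepted within 14 days. The seller pays return shipping.

Free shipping. We accept NET 30 Days purchase orders. Get a decision in seconds with no impact on your credit.

For volume orders of the Lenovo ThinkSystem SR680a V3, call our toll-free WhatsApp line at (+86) 151-0113-5020 or request a quote via live chat. A sales manager will contact you promptly.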


Keywords

Lenovo ThinkSystem SR680a V3, NVIDIA HGX H200, AI Training Server, Intel Xeon Platinum 8568Y+, ConnectX-7 NDR InfiniBand, BlueField-3 DPU, High-Performance Computing, 2TB DDR5 RAM

Description

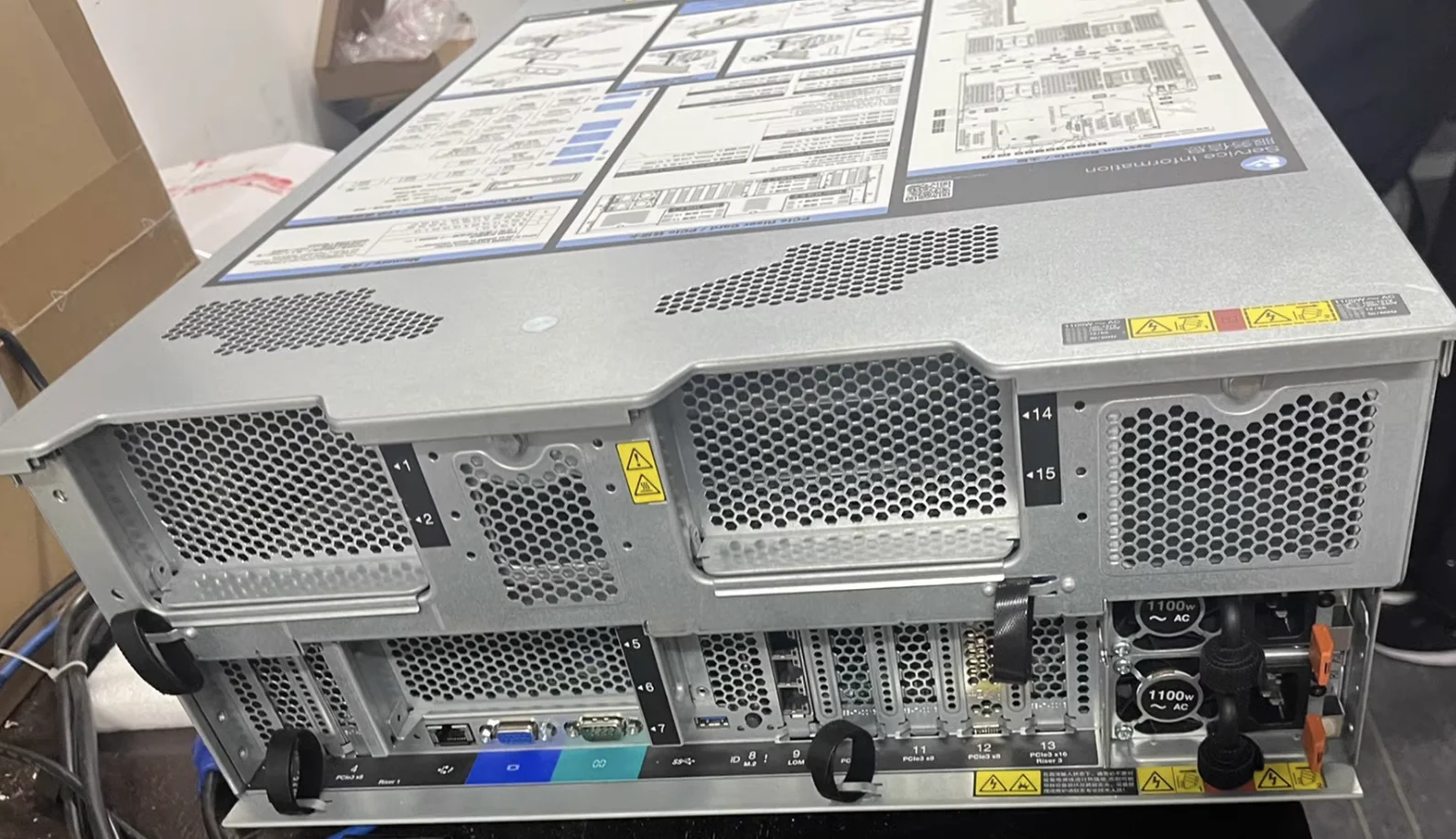

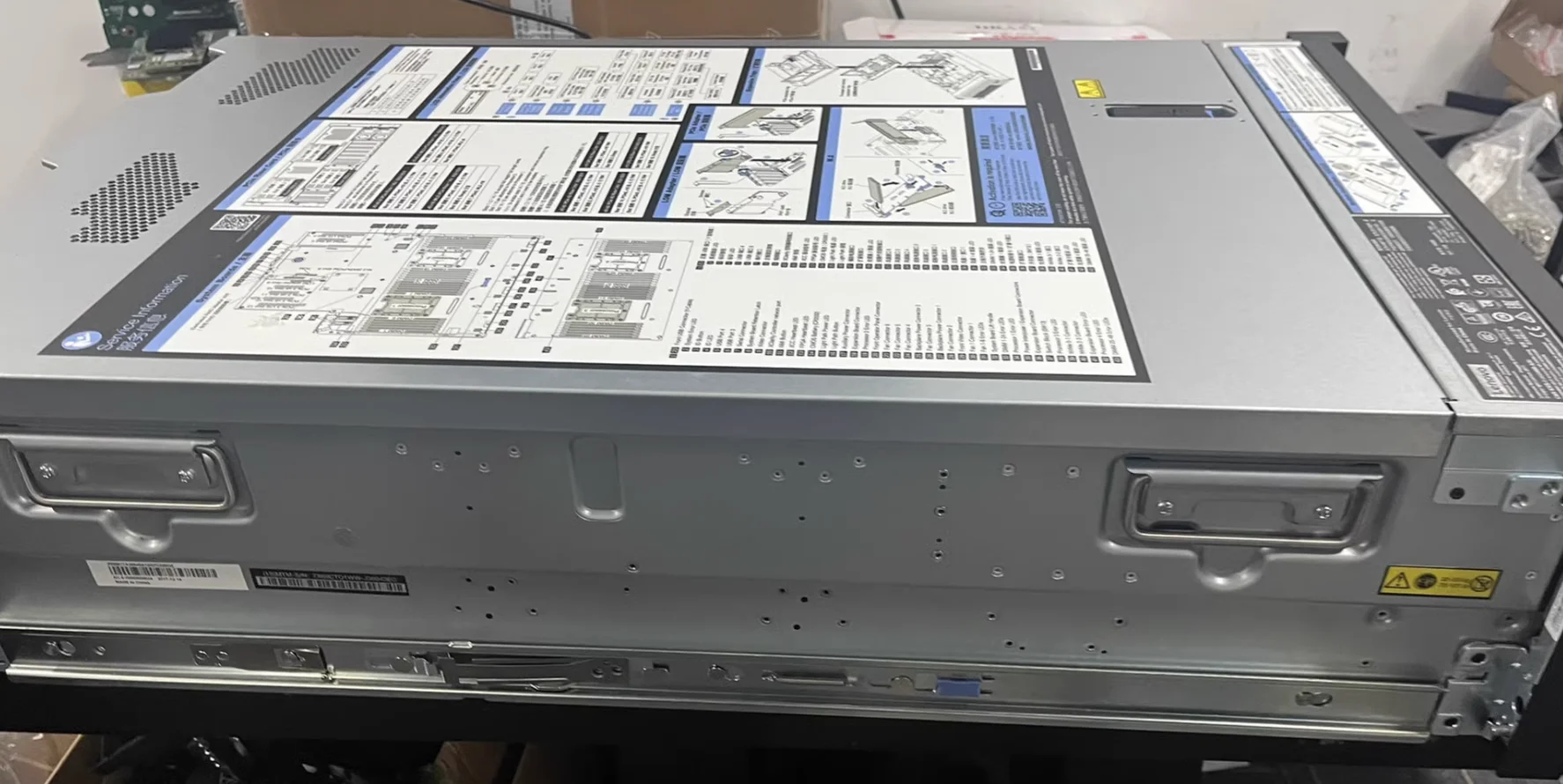

Step into the next generation of artificial intelligence and hyperscale computing with the Lenovo ThinkSystem SR680a V3. Designed to tackle the world's most complex computational challenges, this flagship 8U server acts as the ultimate powerhouse for generative AI, deep learning, and advanced analytics. It is anchored by a robust x86 compute node featuring dual Intel Xeon Platinum 8568Y+ processors. With 48 cores per socket and a 350W TDP, these 5th Generation Emerald Rapids CPUs provide the immense orchestration and data preprocessing capabilities required to keep the GPU subsystem constantly fed.

The crown jewel of this AI Training Server is the NVIDIA HGX H200 8-GPU baseboard. Each H200 GPU is equipped with 141GB of HBM3e memory, a large step up from the previous generation. Operating at 700W each, the eight interconnected GPUs present a combined pool of 1,128GB of HBM3e to the workload. This architecture suits running and fine-tuning very large Large Language Models (LLMs), with hundreds of billions of parameters, under far less pressure from memory capacity.
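As a rough capacity check on the GPU memory figures above, the sketch below totals the HBM3e across the eight GPUs and compares it with the memory needed just to hold a model's weights. The function names and example model sizes are illustrative assumptions, and the estimate deliberately ignores activations, optimizer state, and KV cache, all of which add to real requirements.

```python
def aggregate_hbm_gb(num_gpus: int = 8, gb_per_gpu: int = 141) -> int:
    """Total HBM capacity across the GPU baseboard, in GB."""
    return num_gpus * gb_per_gpu


def weights_gb(params_billions: float, bytes_per_param: int) -> float:
    """Memory for weights only: billions of params times bytes per param gives GB."""
    return params_billions * bytes_per_param


def weights_fit(params_billions: float, bytes_per_param: int) -> bool:
    """True if the weights alone fit in the node's aggregate HBM."""
    return weights_gb(params_billions, bytes_per_param) <= aggregate_hbm_gb()


if __name__ == "__main__":
    print(aggregate_hbm_gb())    # 8 * 141 GB
    print(weights_fit(400, 2))   # 400B parameters at 2 bytes each (FP16/BF16)
    print(weights_fit(1000, 2))  # 1T parameters at 2 bytes each
    print(weights_fit(1000, 1))  # 1T parameters at 1 byte each (for example FP8)
```

Under these assumptions, a trillion-parameter model fits in one node's HBM only at about 1 byte per parameter, and then with little headroom for anything besides the weights.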

To keep data moving between the processors and accelerators, the system is populated with 2TB of DDR5 RAM in the form of 32 64GB TruDDR5 5600MHz RDIMMs. The storage architecture is equally aggressive. The operating system boots from two 960GB M.2 NVMe SSDs mirrored in a RAID 1 array via Intel VROC, so a single boot-drive failure does not take the server down. For high-speed data ingestion and model checkpointing, the server features eight 3.84TB U.2 NVMe PCIe 4.0 SSDs, delivering fast read/write performance directly to the compute plane.
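The memory and storage totals quoted above can be checked with a few lines of arithmetic. This is a minimal sketch: `DriveGroup` is a hypothetical helper, and the data drives are assumed to be used as independent devices with no RAID overhead, which the listing does not specify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriveGroup:
    count: int
    capacity_gb: int
    mirrored: bool = False  # True for a RAID 1 pair

    def usable_gb(self) -> int:
        # RAID 1 mirrors the data, so usable space equals one member drive.
        if self.mirrored:
            return self.capacity_gb
        return self.count * self.capacity_gb


DIMM_COUNT, DIMM_GB = 32, 64
system_ram_gb = DIMM_COUNT * DIMM_GB          # 2048 GB, i.e. 2 TB

boot = DriveGroup(count=2, capacity_gb=960, mirrored=True)
data = DriveGroup(count=8, capacity_gb=3840)  # 3.84 TB per drive, no RAID assumed

if __name__ == "__main__":
    print(system_ram_gb, boot.usable_gb(), data.usable_gb())
```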

Networking in this High-Performance Computing system is engineered for large scale-out clusters. It includes eight ConnectX-7 NDR InfiniBand OSFP400 adapters, providing a dedicated 400Gb/s link for every GPU. This 1:1 ratio is critical for the NVIDIA Magnum IO architecture, which lets GPUs in different servers exchange data directly (GPUDirect RDMA), bypassing the host CPU. North-south traffic and infrastructure management are offloaded to a BlueField-3 DPU (200G), freeing up CPU cycles and supporting zero-trust security.
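To put the backend fabric numbers in perspective, the following sketch converts the per-link line rate into aggregate node bandwidth and an idealized transfer time. The helper names are illustrative, and the results are upper bounds that ignore protocol overhead and congestion.

```python
NICS_PER_NODE = 8
LINK_GBPS = 400  # NDR InfiniBand line rate per port, in gigabits per second


def node_backend_gbps(nics: int = NICS_PER_NODE, link_gbps: int = LINK_GBPS) -> int:
    """Aggregate backend line rate for one server, in Gb/s."""
    return nics * link_gbps


def gbps_to_gigabytes_per_s(gbps: float) -> float:
    """Convert gigabits per second to gigabytes per second."""
    return gbps / 8


def transfer_seconds(size_gb: float, gbps: float) -> float:
    """Ideal time to move size_gb gigabytes over a link of the given line rate."""
    return size_gb / gbps_to_gigabytes_per_s(gbps)


if __name__ == "__main__":
    print(node_backend_gbps())                          # total Gb/s across 8 links
    print(gbps_to_gigabytes_per_s(node_backend_gbps())) # same figure in GB/s
    print(transfer_seconds(141, LINK_GBPS))             # one GPU's HBM over one link
```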

Sustaining this level of extreme performance requires enterprise-grade power and thermal engineering. The SR680a V3 is equipped with eight 2600W Titanium Hot-Swap power supplies, configured for N+N redundancy with over-subscription capabilities. This ensures that even during extreme transient power spikes characteristic of intense AI workloads, the system remains stable. Paired with Lenovo's advanced front and rear fan control boards, this server delivers uncompromising reliability in modern high-density datacenters.
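The power figures above lend themselves to a quick budget check. The sketch below assumes N+N means half of the supplies sit on each power feed (4+4 here) and counts only the rated GPU and CPU power; memory, NICs, drives, and fans are not included, and the function names are illustrative.

```python
def installed_capacity_w(psu_count: int, psu_watts: int) -> int:
    """Nameplate capacity of all power supplies combined."""
    return psu_count * psu_watts


def redundant_capacity_w(psu_count: int, psu_watts: int) -> int:
    """Capacity that survives the loss of one feed in an N+N layout."""
    if psu_count % 2:
        raise ValueError("N+N redundancy needs an even number of supplies")
    return (psu_count // 2) * psu_watts


def gpu_cpu_rated_w(gpus: int = 8, gpu_w: int = 700, cpus: int = 2, cpu_w: int = 350) -> int:
    """Sum of rated GPU and CPU power only."""
    return gpus * gpu_w + cpus * cpu_w


if __name__ == "__main__":
    print(installed_capacity_w(8, 2600))
    print(redundant_capacity_w(8, 2600))
    print(gpu_cpu_rated_w())
```

The gap between the redundant capacity and the GPU plus CPU total is what remains for every other component and for transient spikes before over-subscription comes into play.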

Key Features

- Unprecedented AI Acceleration: 8x NVIDIA HGX H200 GPUs, each with 141GB HBM3e memory (700W per GPU).

- Elite Orchestration: Dual Intel Xeon Platinum 8568Y+ CPUs (48 cores, 2.3GHz, 350W each).

- Massive Memory Footprint: 2048GB (32x 64GB) of TruDDR5 5600MHz ECC RDIMM memory.

- Rail-Optimized Networking: 8x NVIDIA ConnectX-7 NDR 400Gb/s InfiniBand adapters for 1:1 GPU fabric.

- Infrastructure Offloading: NVIDIA BlueField-3 200G DPU for enhanced security and network efficiency.

- High-Speed NVMe Storage: 8x 3.84TB U.2 PM9A3 NVMe SSDs + 2x 960GB M.2 OS boot drives in Intel VROC RAID 1.

- Mission-Critical Power: 8x 2600W Titanium Gen2 Power Supplies with N+N redundancy.

Configuration

| Component | Description / Part Number |
|---|---|
| System Base | ThinkSystem SR680a V3 H200 GPU Base (C1EL) |
| Processors (CPU) | 2x Intel Xeon Platinum 8568Y+ 48C 350W 2.3GHz (BYWF) |
| GPU Board | ThinkSystem NVIDIA HGX H200 141GB 700W 8-GPU Board (C1HM) |
| Memory (RAM) | 32x 64GB TruDDR5 5600MHz (2Rx4) RDIMM - Total 2TB (C5H9) |
| Data Storage | 8x 2.5" U.2 PM9A3 3.84TB Read Intensive NVMe PCIe 4.0 SSD (BXM9) |
| OS Boot Storage | 2x M.2 PM9A3 960GB NVMe SSD (BXMH) with Intel VROC RAID 1 (BS7M, BS7F) |
| Backend Network (GPU) | 8x NVIDIA ConnectX-7 NDR OSFP400 1-Port InfiniBand Adapter (BQ1N) |
| Frontend Network (DPU) | 1x NVIDIA BlueField-3 B3220 VPI QSFP112 2P 200G Adapter (BVBG) |
| Power Supplies | 8x 2600W 230V Titanium Hot-Swap Gen2 Power Supply v4 (C4HK) |
| Management & Security | TPM 2.0 with Secure Boot (BPKQ), Front Operator Panel LCD (BAVU) |

Compatibility

The Lenovo ThinkSystem SR680a V3 is designed as the premier hardware foundation for the NVIDIA AI Enterprise software platform. It is fully certified for modern enterprise operating systems including Ubuntu Server LTS and Red Hat Enterprise Linux (RHEL). The ConnectX-7 NDR InfiniBand adapters are designed to interface seamlessly with NVIDIA Quantum-2 NDR switches to achieve full 400Gb/s throughput. Furthermore, the BlueField-3 DPU is fully supported by the NVIDIA DOCA software framework and VMware vSphere for next-generation software-defined datacenter architectures.

Usage Scenarios

This configuration is the gold standard for an AI Training Server handling Foundation Models. The transition from H100 to NVIDIA HGX H200 introduces 141GB of memory per GPU. This massive increase means data scientists can train and fine-tune Large Language Models (LLMs) with hundreds of billions of parameters using less complex tensor parallelism, significantly accelerating time-to-market for proprietary AI solutions.

In the realm of Generative AI Inference, this system shines as a high-throughput engine. Serving complex multimodal models (text, image, and video generation) requires immense memory bandwidth to prevent latency bottlenecks. The H200's HBM3e memory ensures that token generation for concurrent user requests happens in real-time, providing a seamless end-user experience for enterprise AI applications.

For High-Performance Computing (HPC), this server bridges the gap between AI and traditional scientific simulation. Applications in computational fluid dynamics, weather modeling, molecular dynamics, and protein structure prediction (such as AlphaFold) can leverage the 8x GPU interconnect and the 2TB of DDR5 RAM to process datasets that would overwhelm standard CPU-based clusters.

Finally, this system is architected for large scale-out AI factories. Using the eight ConnectX-7 NDR InfiniBand adapters, organizations can link hundreds of SR680a V3 nodes into a SuperPOD-style cluster. The BlueField-3 DPU concurrently acts as a security perimeter, isolating tenant workloads in cloud environments and offloading NVMe-oF (NVMe over Fabrics) storage tasks so the Intel Xeon Platinum 8568Y+ processors remain dedicated to compute orchestration.

Frequently Asked Questions

Q: What is the main advantage of the H200 over the H100 in the Lenovo ThinkSystem SR680a V3? A: The primary advantage is memory capacity and bandwidth. The H200 features 141GB of HBM3e memory, compared to the 80GB of HBM3 found on the H100. That is about 1.76x the capacity per GPU, so larger AI models fit into the GPU memory of a single node, which improves inference speed and training efficiency.
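The capacity difference in this answer can be made concrete with a short calculation. The sketch below is illustrative only: the 70-billion-parameter model is a hypothetical example, and the estimate counts weights alone, so real deployments need extra room for KV cache and activations.

```python
import math

H100_GB, H200_GB = 80, 141  # per-GPU memory capacities quoted in the answer above


def capacity_ratio() -> float:
    """How many times more memory an H200 carries than an H100."""
    return H200_GB / H100_GB


def min_gpus_for_weights(params_billions: float, bytes_per_param: int, gpu_gb: int) -> int:
    """Smallest GPU count whose combined memory holds the weights alone."""
    weights_gb = params_billions * bytes_per_param
    return math.ceil(weights_gb / gpu_gb)


if __name__ == "__main__":
    print(capacity_ratio())
    # Hypothetical 70B-parameter model at 2 bytes per parameter (140 GB of weights)
    print(min_gpus_for_weights(70, 2, H100_GB))
    print(min_gpus_for_weights(70, 2, H200_GB))
```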

Q: Why does this server include eight ConnectX-7 NDR InfiniBand cards and a BlueField-3 DPU? A: The eight ConnectX-7 cards provide a "rail-optimized" backend fabric. This means every GPU has its own dedicated 400Gb/s network link to communicate with GPUs in other servers, so the cluster can scale out without GPUs contending for a shared adapter. The BlueField-3 DPU handles the frontend network, managing client requests, storage access, and security firewalls, offloading these tasks from the host CPUs.
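One common way to wire a rail-optimized fabric is to attach the adapter for GPU k in every server to the same switch group, called rail k. The listing does not describe the switch topology, so the sketch below is an assumption-based illustration of that layout, with hypothetical names throughout.

```python
from collections import defaultdict

GPUS_PER_NODE = 8


def build_rails(num_nodes: int) -> dict[int, list[tuple[int, int]]]:
    """Map rail index -> list of (node, gpu) endpoints attached to that rail."""
    rails: dict[int, list[tuple[int, int]]] = defaultdict(list)
    for node in range(num_nodes):
        for gpu in range(GPUS_PER_NODE):
            # Assumed layout: GPU k's dedicated adapter always joins rail k.
            rails[gpu].append((node, gpu))
    return dict(rails)


if __name__ == "__main__":
    for rail, endpoints in build_rails(num_nodes=4).items():
        print(rail, endpoints)
```

With this layout, GPUs that share an index across servers reach each other through a single rail, which is the traffic pattern collective operations tend to favor.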

Q: How is the storage configured for redundancy and performance? A: Operating system resilience is handled by two 960GB M.2 NVMe SSDs configured as a RAID 1 mirror using Intel VROC, so the server stays online even if a boot drive fails. The eight 3.84TB U.2 NVMe drives are typically passed through directly to the operating system or a clustered file system to provide the highest possible IOPS for feeding training data to the GPUs.

Q: What are the power and facility requirements for this system? A: This server is extremely power-dense. It features eight 2600W Titanium power supplies (230V units that need an input of 200V or higher) configured for N+N redundancy. It is designed for modern, high-density datacenter racks capable of providing and cooling 10kW to 15kW of power per server. Standard office or low-density server room power circuits are insufficient for this hardware.
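For facility planning, the per-server range in this answer translates into rack density and heat load as sketched below. The 40kW rack budget is a hypothetical figure chosen for illustration, and the conversion uses the standard factor of about 3.412 BTU/hr per watt.

```python
import math

BTU_PER_HR_PER_WATT = 3.412  # standard conversion factor


def servers_per_rack(rack_budget_kw: float, server_kw: float) -> int:
    """Whole servers that fit within a rack's power budget."""
    if server_kw <= 0:
        raise ValueError("server_kw must be positive")
    return math.floor(rack_budget_kw / server_kw)


def cooling_btu_per_hr(server_kw: float) -> float:
    """Heat that must be removed, assuming all input power becomes heat."""
    return server_kw * 1000 * BTU_PER_HR_PER_WATT


if __name__ == "__main__":
    rack_kw = 40  # hypothetical rack power budget
    print(servers_per_rack(rack_kw, 10), servers_per_rack(rack_kw, 15))
    print(cooling_btu_per_hr(10), cooling_btu_per_hr(15))
```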

この商品に関連する製品

-

Lenovo ThinkSystem SR680a V3 AI Server 8U Rack wit... - 品番: SR680AV3...

- 在庫状況:In Stock

- 状態:新品

- 定価:$329,999.00

- 販売価格: $328,999.00

- 節約額 $1,000.00

- 今すぐチャット メールを送信

-

ThinkSystem SR665V3 2U Rack Server 24 Bay 2.5 Inch... - 品番: SR665V3...

- 在庫状況:In Stock

- 状態:新品

- 定価:$5,999.00

- 販売価格: $5,599.00

- 節約額 $400.00

- 今すぐチャット メールを送信

-

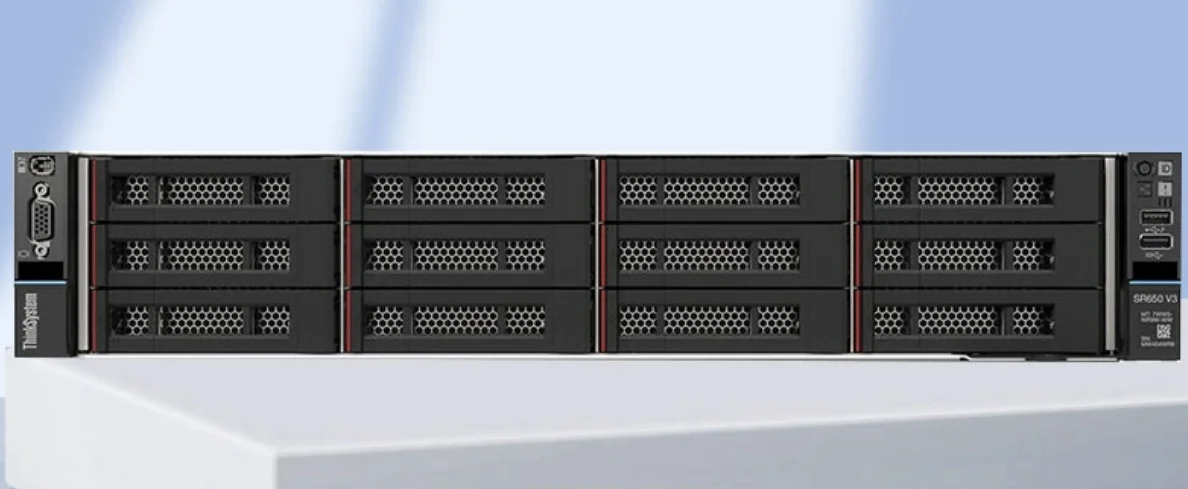

Lenovo ThinkSystem SR650 V3 2U ラック サーバー - デュアル Xeo... - 品番: Lenovo ThinkSystem S...

- 在庫状況:In Stock

- 状態:新品

- 定価:$13,199.00

- 販売価格: $12,999.00

- 節約額 $200.00

- 今すぐチャット メールを送信

-

Dell PowerEdge R760xs 第 16 世代 2U ラック サーバー - デュアル X... - 品番: R760xs...

- 在庫状況:In Stock

- 状態:新品

- 定価:$14,199.00

- 販売価格: $13,999.00

- 節約額 $200.00

- 今すぐチャット メールを送信

-

HPE ProLiant サーバー DL-360-G11 – ラック (SFF) 2*6544Y/3... - 品番: DL360 Gen11...

- 在庫状況:In Stock

- 状態:新品

- 定価:$23,888.00

- 販売価格: $21,666.00

- 節約額 $2,222.00

- 今すぐチャット メールを送信

-

Lenovo SR650 2U ラック サーバー デュアル Xeon Gold 6248R 256G... - 品番: Lenovo SR650...

- 在庫状況:In Stock

- 状態:新品

- 定価:$18,999.00

- 販売価格: $18,699.00

- 節約額 $300.00

- 今すぐチャット メールを送信

-

Dell PowerEdge R750XS 2U ラック サーバー、Xeon Gold 6330、1... - 品番: Dell PowerEdge R750X...

- 在庫状況:In Stock

- 状態:新品

- 定価:$9,299.00

- 販売価格: $9,199.00

- 節約額 $100.00

- 今すぐチャット メールを送信

-

Dell PowerEdge R7525 AMD EPYC 2U ラック サーバー – 256GB ... - 品番: Dell PowerEdge R7525...

- 在庫状況:In Stock

- 状態:新品

- 定価:$7,799.00

- 販売価格: $7,699.00

- 節約額 $100.00

- 今すぐチャット メールを送信